Building a basic chatbot that simply responds to user input is relatively straightforward. However, designing a production-ready AI agent that can reliably retrieve company knowledge, search both structured and unstructured data, interact with databases, manage portfolio information, and carry out real-world actions such as booking consultations requires a far more advanced and structured approach.

To achieve this, I implemented a graph-based, tool-driven architecture using LangGraph integrated with FastAPI. This system introduces persistent state management, conditional workflow routing, and controlled tool execution, allowing the language model to move beyond simple text generation and operate as a stateful, multi-step decision-making agent. The result is a scalable, production-ready assistant capable of handling complex business workflows with accuracy, traceability, and reliability.

Before diving into the architecture, it is essential to define what makes a system “agentic.” Unlike traditional chatbots, where a user guides every interaction, agentic AI possesses autonomy. You provide a goal, and the system determines the steps needed to achieve it. This transition is defined by four foundational pillars:

The agent doesn’t wait for step-by-step instructions. Once it understands the objective, it decides what to do next on its own. It evaluates options, makes judgement calls, and moves forward without needing constant guidance or correction.

It stays focused on the end goal. As it works, it checks whether its actions are actually producing results. If something isn’t working, it adjusts its approach, tries a different angle, or breaks the problem down in a new way until it moves closer to the desired outcome.

The agent pays attention to what’s happening around it. Whether it’s new data entering a database, changes in user behaviour, or shifts in system conditions, it detects those updates and responds accordingly. It doesn’t operate in isolation; it reacts to its environment in real time.

It learns from experience. By remembering past actions, outcomes, and context, it improves how it handles similar situations in the future. Over time, its decisions become more informed and efficient because it builds on what it has already seen and done. The LangGraph and FastAPI architecture in this article was designed to support these four properties.

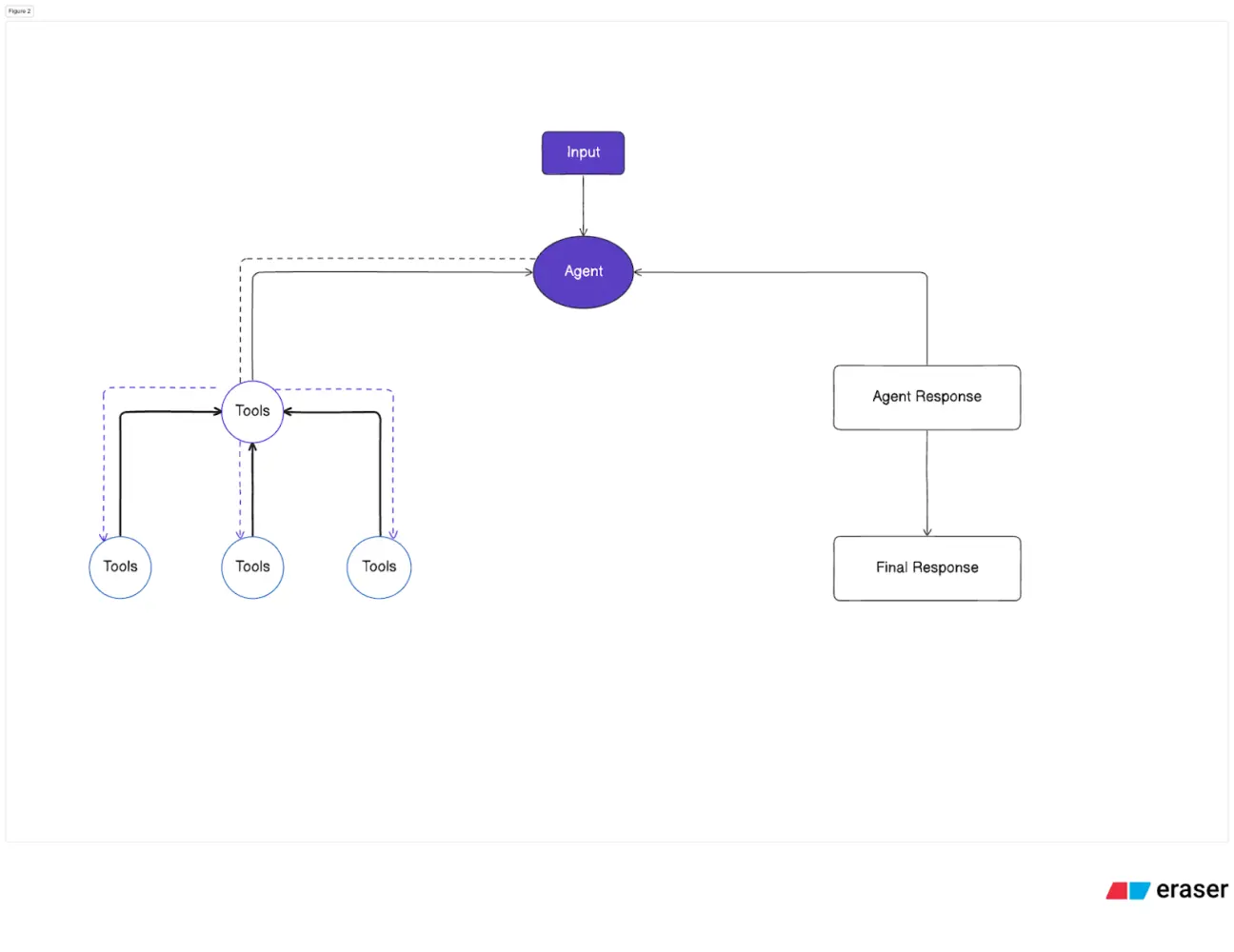

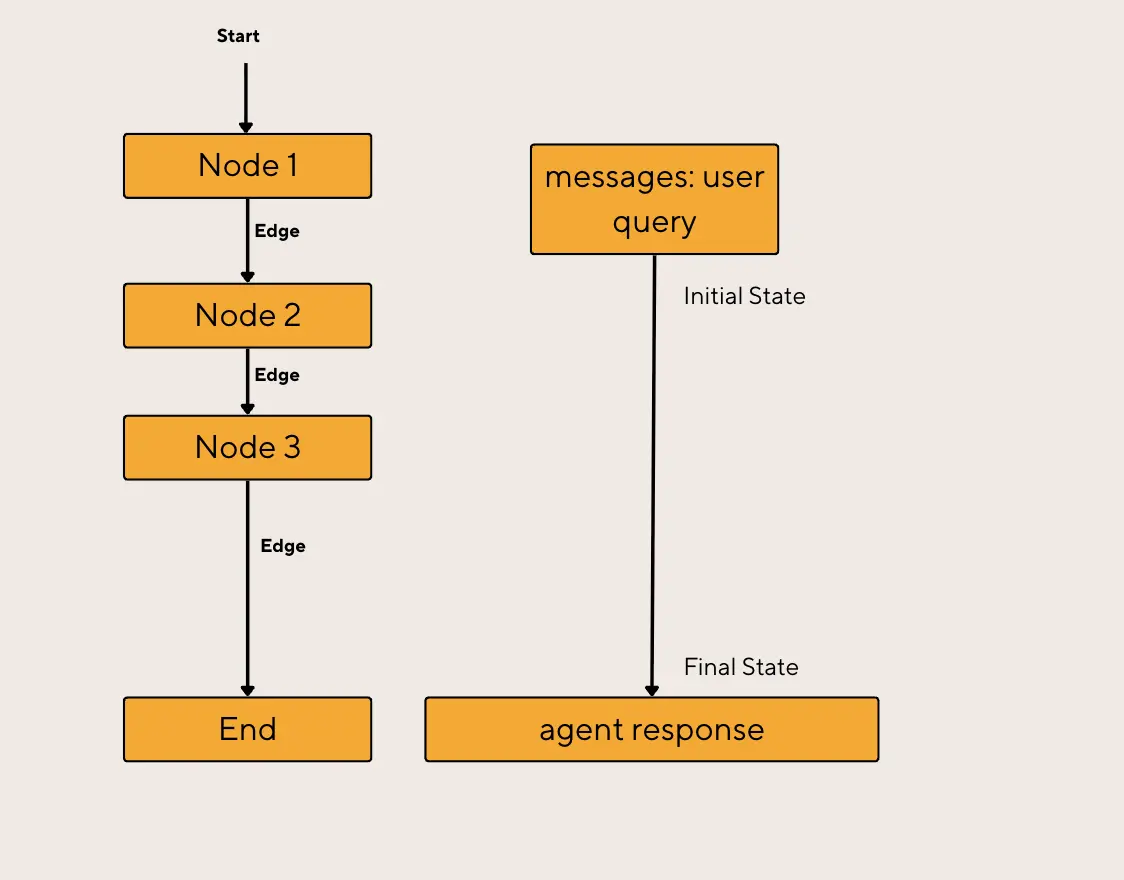

Traditional AI “chains” move in a straight line (Input → AI → Output). This setup breaks if the AI makes a mistake or requires more information. LangGraph, on the other hand, is a graph-based workflow made up of nodes and edges. The nodes carry out functionality within the workflow, while the edges control its direction.

The following diagram illustrates how LangGraph works at a high level.

A LangGraph agent receives input, which may come from a user or another LangGraph agent. Typically, an LLM-based agent processes the input and decides whether one or more tools need to be called. Alternatively, it may generate a response directly and move on to the next stage in the graph.

If the agent chooses to call tools, the tool processes the request and returns the result to the agent. The agent then uses this output to generate its final response, which is passed to the next step in the workflow.

LangGraph operates like a flowchart (a graph) with three main components:

Nodes:

Think of nodes as the individual steps in the workflow. Each one represents a specific action, such as calling an AI model, running a tool, checking a database, or validating an answer. Rather than everything happening in one long sequence, the process is broken into these clear, manageable units of work.

Edges:

Edges are the connections between those steps. They determine what happens next. Sometimes the path is straightforward, but other times it depends on the outcome of the previous step. For example, if the AI detects missing information, the system might route the flow towards asking a follow-up question instead of moving ahead. In that sense, the flow is not rigid; it adapts to the results available at each step.

State:

State is the shared memory that keeps everything aligned. It holds the conversation history, intermediate results, user inputs, and any data collected along the way. Every node can read from it and update it, so the system always has full context of what’s happened so far.

Because it’s a graph, the agent can loop back to fix errors or request more details until the task is complete.
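To make the three components concrete, here is a minimal, library-free sketch of a graph with nodes, conditional edges, shared state, and a loop. It is not the LangGraph API (there, a `StateGraph` with `add_node` and `add_conditional_edges` plays this role), and every name in it (`AgentState`, `check_details`, `ask_follow_up`, `run_graph`) is a hypothetical chosen for illustration.

```python
from typing import Callable, Dict, List, Optional, TypedDict


class AgentState(TypedDict):
    messages: List[str]     # running log of what has happened so far
    email: Optional[str]    # a detail the workflow needs before it can finish
    done: bool


# Nodes: each step reads the shared state and returns an updated copy.
def check_details(state: AgentState) -> AgentState:
    return {**state, "messages": state["messages"] + ["checked details"]}


def ask_follow_up(state: AgentState) -> AgentState:
    # Stand-in for a real user reply; the address is made up.
    return {**state, "email": "user@example.com",
            "messages": state["messages"] + ["asked for email"]}


def finish(state: AgentState) -> AgentState:
    return {**state, "done": True,
            "messages": state["messages"] + ["task complete"]}


# Conditional edge: the next node depends on what the state contains.
def route_after_check(state: AgentState) -> str:
    return "finish" if state["email"] else "ask_follow_up"


NODES: Dict[str, Callable[[AgentState], AgentState]] = {
    "check_details": check_details,
    "ask_follow_up": ask_follow_up,
    "finish": finish,
}
EDGES: Dict[str, Callable[[AgentState], str]] = {
    "check_details": route_after_check,
    "ask_follow_up": lambda state: "check_details",  # loop back and re-check
    "finish": lambda state: "END",
}


def run_graph(state: AgentState, entry: str = "check_details",
              max_steps: int = 10) -> AgentState:
    current = entry
    for _ in range(max_steps):          # cap the steps so a bad route cannot spin forever
        state = NODES[current](state)
        current = EDGES[current](state)
        if current == "END":
            return state
    raise RuntimeError("graph did not finish within max_steps")


result = run_graph({"messages": [], "email": None, "done": False})
```

In this run the first check finds no email, the flow detours through `ask_follow_up`, loops back to `check_details`, and only then reaches `finish`.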

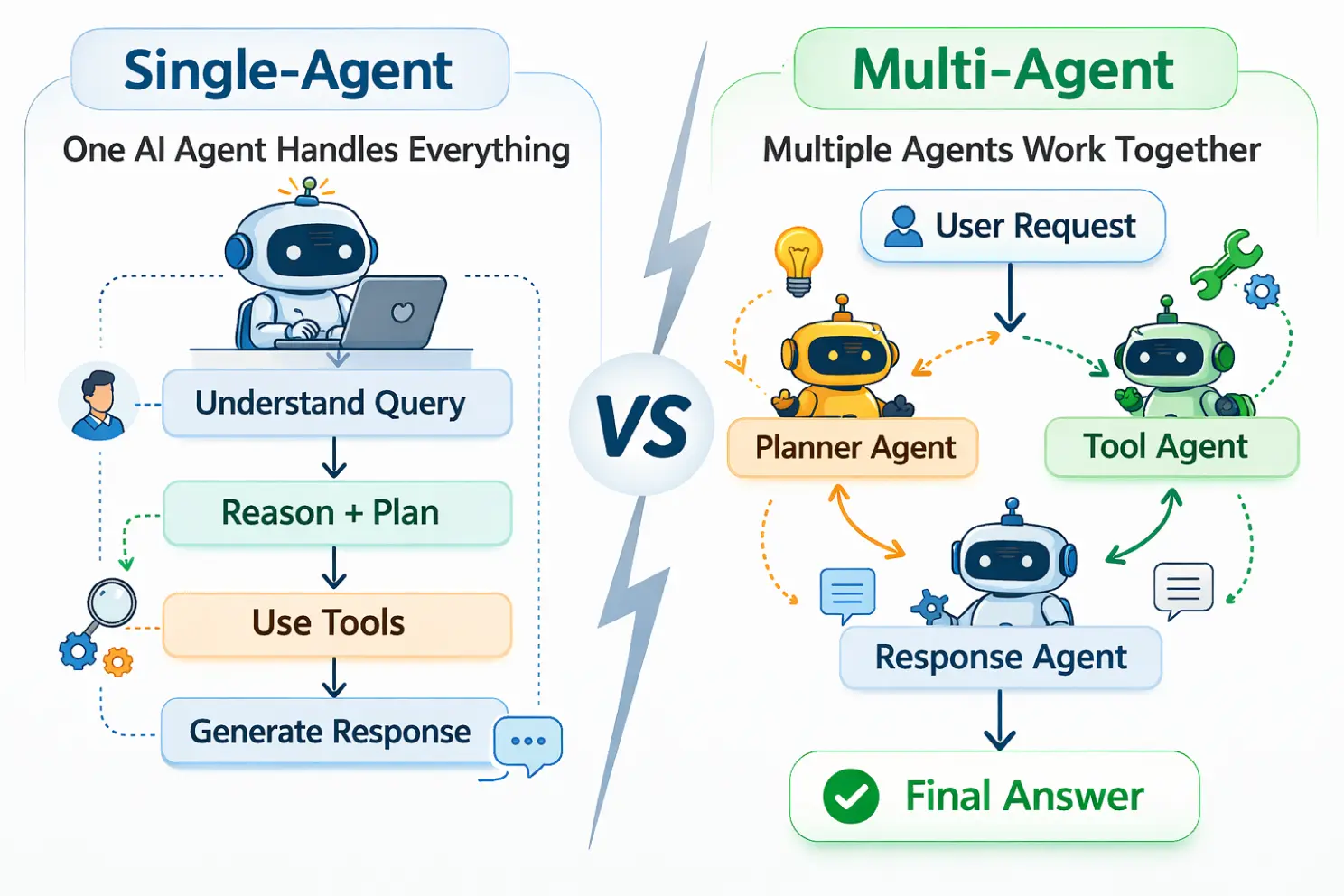

One of the most important architectural decisions when designing an AI agent is choosing between a single, centralised system and a distributed group of specialised agents. The difference isn’t just technical; it also affects performance, cost, complexity, and reliability.

For this project, I opted for a Single-Agent System. This architecture consolidates reasoning, memory, and tool execution into one instance. It relies on four core components: an LLM Reasoning Engine, a Memory System for context-aware behaviour, a Tool Integration Layer, and a Planning Module to decompose tasks.

The advantages of a single-agent system compared to a multi-agent system are outlined below:

In a multi-agent system, tasks often move from one agent to another, for example, from a planner to a researcher to an executor. Each hand-off requires extra processing, additional LLM calls, and internal communication. That coordination takes time. Even small delays accumulate when multiple agents are involved. With a single agent handling everything internally, decisions happen in one continuous flow, significantly reducing response time and making the system feel faster and more seamless.

Each interaction between agents consumes tokens, especially when they need to share context or summarise information for the next agent in the chain. Over time, this back-and-forth can become expensive. By consolidating reasoning into one agent, there are fewer redundant exchanges and less repeated context passing. The result is more efficient token usage and lower operational costs, particularly at scale.

When a system includes multiple agents, understanding why something went wrong can mean tracing logic across several independent components. You may need to inspect how one agent interpreted instructions, how another responded, and whether context was passed correctly between them. In a single-agent setup, the entire reasoning process exists in one place. This centralised flow makes it easier to monitor behaviour, diagnose issues, and improve performance without untangling inter-agent dependencies.

For structured business processes, such as appointment booking, transaction lookups, or portfolio queries, clarity and predictability are essential. A single agent maintains one consistent understanding of the task from start to finish. In contrast, multiple agents can sometimes interpret goals slightly differently, leading to inconsistencies or what’s often described as “mental model drift.” With a centralised approach, control remains tight, execution is more consistent, and outcomes are easier to standardise.

The table below illustrates the differences between Single-Agent and Multi-Agent systems:

| Aspect | Single-Agent (My Choice) | Multi-Agent |
|---|---|---|
| Architecture | One unified reasoning pathway | Multiple specialised agents |
| Complexity | Low to moderate | High (requires orchestration) |
| Best For | Linear workflows, speed, & cost | Security boundaries, multi-domain scaling |
| Cost | Predictable & efficient | Higher (multiple LLM calls) |

Before you initialise a tool, it’s important to understand how the tool should work and ensure it is set up correctly. You can refer to the official LangChain documentation for guidance.

To make the agent useful for real-world business applications and enable it to perform various operations, I integrated several specialised tools into the chatbot:

The Knowledge Base acts as the foundational information layer of the system. It stores company data in embedded document form, allowing the agent to retrieve relevant information using vector similarity search rather than simple keyword matching. Queries are understood semantically, meaning the system can identify contextually relevant content even if the exact wording differs. By comparing the meaning of a user’s question with the stored documents, it surfaces the most relevant information quickly and accurately. The Knowledge Base serves as the primary source of truth for company-related queries, ensuring responses are grounded in verified internal documentation rather than generated assumptions.
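As a rough illustration of vector similarity search, the sketch below ranks a few documents by cosine similarity to a query vector. The three-dimensional vectors and the document texts are made up for the example; a real system would get its vectors from an embedding model and would normally store them in a vector index rather than a dictionary.

```python
import math
from typing import Dict, List, Tuple


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Toy "embedded documents": hand-written vectors standing in for real embeddings.
DOCUMENTS: Dict[str, List[float]] = {
    "Consultations can be booked online.": [0.9, 0.1, 0.0],
    "We build custom dashboards and portals.": [0.1, 0.9, 0.1],
    "Our office is located in Brisbane.": [0.0, 0.2, 0.9],
}


def top_k(query_vector: List[float], k: int = 2) -> List[Tuple[float, str]]:
    """Return the k documents most similar to the query, best first."""
    scored = [(cosine_similarity(query_vector, vector), text)
              for text, vector in DOCUMENTS.items()]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]


# Pretend this is the embedding of "How do I schedule a meeting?"
query = [0.8, 0.2, 0.1]
best_match = top_k(query, k=1)[0][1]
```

The query shares no keywords with the booking document, yet it ranks first because the vectors point in a similar direction, which is the behaviour the paragraph above describes.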

The Scraper Tool works alongside vector embeddings to ensure the system retrieves both structured and meaning-based information effectively. While structured data is stored in PostgreSQL with a strict relational schema covering service descriptions, portfolio details, and verified official URLs, vector embeddings enhance search capabilities across the content. This allows semantic matching, identifying relevant records even when the user’s wording doesn’t exactly match the database entries. By combining relational storage with embedding-based similarity search, the system benefits from both precision and flexibility. All links are retrieved directly from the database through the tools, preventing hallucinated or broken URLs and ensuring responses are grounded in verified, real-time data.
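The idea of serving links only from the database can be sketched as follows. To keep the example self-contained it uses an in-memory SQLite database as a stand-in for PostgreSQL, and the table layout, the function names, and the `example.com` URL are all assumptions rather than the project's actual schema.

```python
import sqlite3
from typing import Optional


def build_demo_db() -> sqlite3.Connection:
    """Create an in-memory table with one verified service record."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE services ("
        "name TEXT PRIMARY KEY, description TEXT NOT NULL, url TEXT NOT NULL)"
    )
    conn.execute(
        "INSERT INTO services VALUES (?, ?, ?)",
        ("Custom Dashboards", "Dashboards and portals built to order.",
         "https://example.com/services/custom-dashboards"),
    )
    conn.commit()
    return conn


def get_service_url(conn: sqlite3.Connection, name: str) -> Optional[str]:
    """Return the stored URL for a service, or None if there is no record."""
    row = conn.execute(
        "SELECT url FROM services WHERE lower(name) = lower(?)", (name,)
    ).fetchone()
    return row[0] if row else None


def answer_with_link(conn: sqlite3.Connection, name: str) -> str:
    url = get_service_url(conn, name)
    if url is None:
        # No record means no link: the tool never makes one up.
        return f"I could not find a verified link for '{name}'."
    return f"More about {name}: {url}"


conn = build_demo_db()
```

Because the model only ever sees URLs returned by `get_service_url`, a missing record produces an honest "not found" message instead of a guessed address.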

The Booking System is a dedicated tool within the agent’s toolkit, designed to handle consultation requests in a controlled and reliable way. It connects to an external API to submit bookings, but only through a structured workflow rather than direct execution. The process follows multiple steps: collecting required details, validating information, presenting a final summary, and pausing for user confirmation. Nothing is sent externally until the user explicitly approves it. This strict confirmation sequence ensures accuracy, prevents accidental submissions, and keeps the user fully in control.
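A minimal sketch of that confirmation gate is shown below. The required fields, the `BookingSession` class, and the `send` callback (which stands in for the external booking API) are hypothetical; the point is only that nothing reaches `send` until validation passes and the user has confirmed.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

REQUIRED_FIELDS = ("name", "email", "date")  # assumed fields, for illustration


@dataclass
class BookingSession:
    details: Dict[str, str] = field(default_factory=dict)
    confirmed: bool = False

    def validate(self) -> List[str]:
        """Return a list of problems; an empty list means the details are usable."""
        errors = [f"missing {name}" for name in REQUIRED_FIELDS
                  if not self.details.get(name)]
        email = self.details.get("email", "")
        if email and "@" not in email:
            errors.append("email looks invalid")
        return errors

    def summary(self) -> str:
        return ", ".join(f"{name}: {self.details[name]}" for name in REQUIRED_FIELDS)


def submit_booking(session: BookingSession,
                   send: Callable[[Dict[str, str]], None]) -> str:
    errors = session.validate()
    if errors:
        return "Cannot book yet: " + "; ".join(errors)
    if not session.confirmed:
        # Pause here: show the summary and wait for explicit approval.
        return "Please confirm: " + session.summary()
    send(session.details)  # the only place the external call happens
    return "Booking submitted."
```

Calling `submit_booking` repeatedly walks the user through the steps: first the missing details, then the summary, and only after `confirmed` is set to `True` the actual submission.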

The Portfolio and Service Tools provide accurate, up-to-date information by fetching data directly from APIs. Rather than relying on static content, these tools dynamically retrieve portfolio entries and detailed service descriptions in real time. They can handle both broad questions, such as an overview of offerings, and precise lookups, such as a specific project or service request. Before generating a response, the system retrieves the relevant structured data through the tools, ensuring information is organised, consistent, and grounded in verified sources rather than improvised by the model. This structured retrieval process maintains accuracy and clarity in every answer.

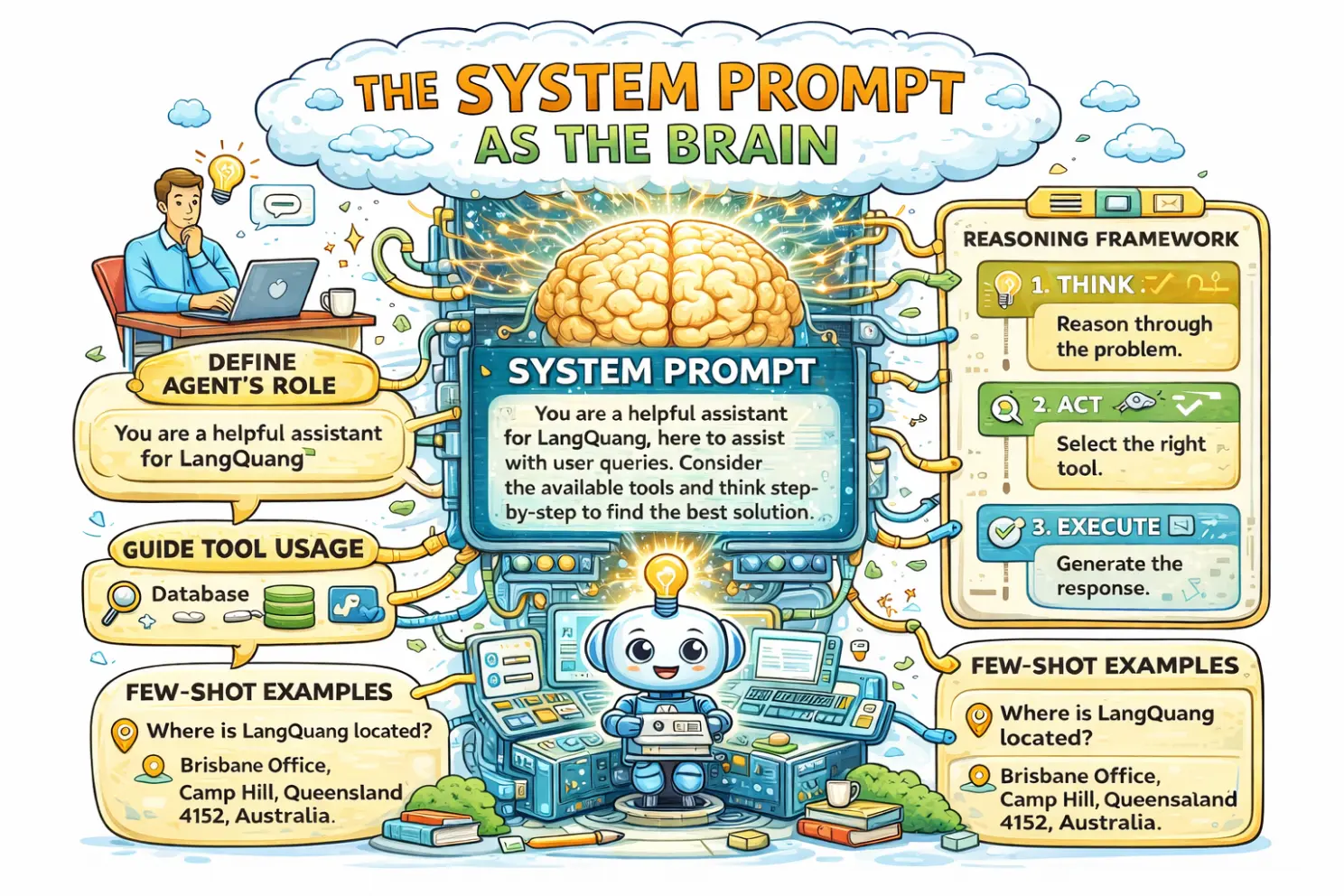

One of my biggest takeaways was realising that the system prompt is the brain of the operation. In agent-based systems, decisions such as tool usage and response generation are directly driven by the prompt. The model’s behaviour depends on how clearly and effectively the prompt is designed.

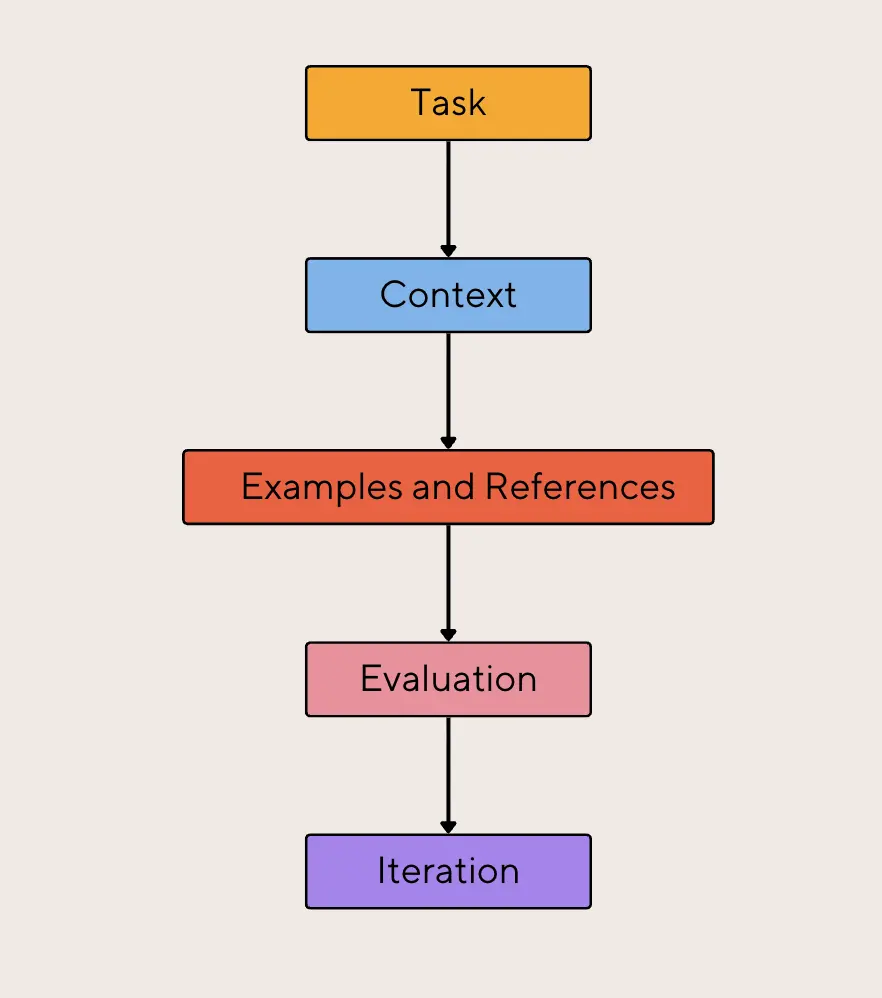

To consistently achieve the desired outputs from large language models, it is essential to understand the prompt framework. A well-structured prompt typically includes five core components:

The task is the core instruction from the user, essentially the question being asked or the goal that needs to be completed. It defines exactly what the model is expected to produce, whether that’s an explanation, summary, analysis, or structured output. When the task is written clearly and specifically, there’s less room for misunderstanding, which leads to more accurate and relevant responses.

Context provides the background information the model needs to answer correctly. This could include relevant details, constraints, data, or situational information. The key is to include only what’s necessary. Too little context can result in vague or incomplete answers, while too much unrelated information can distract the model and reduce response quality. Well-chosen context helps keep the output focused and aligned with the user’s intent.

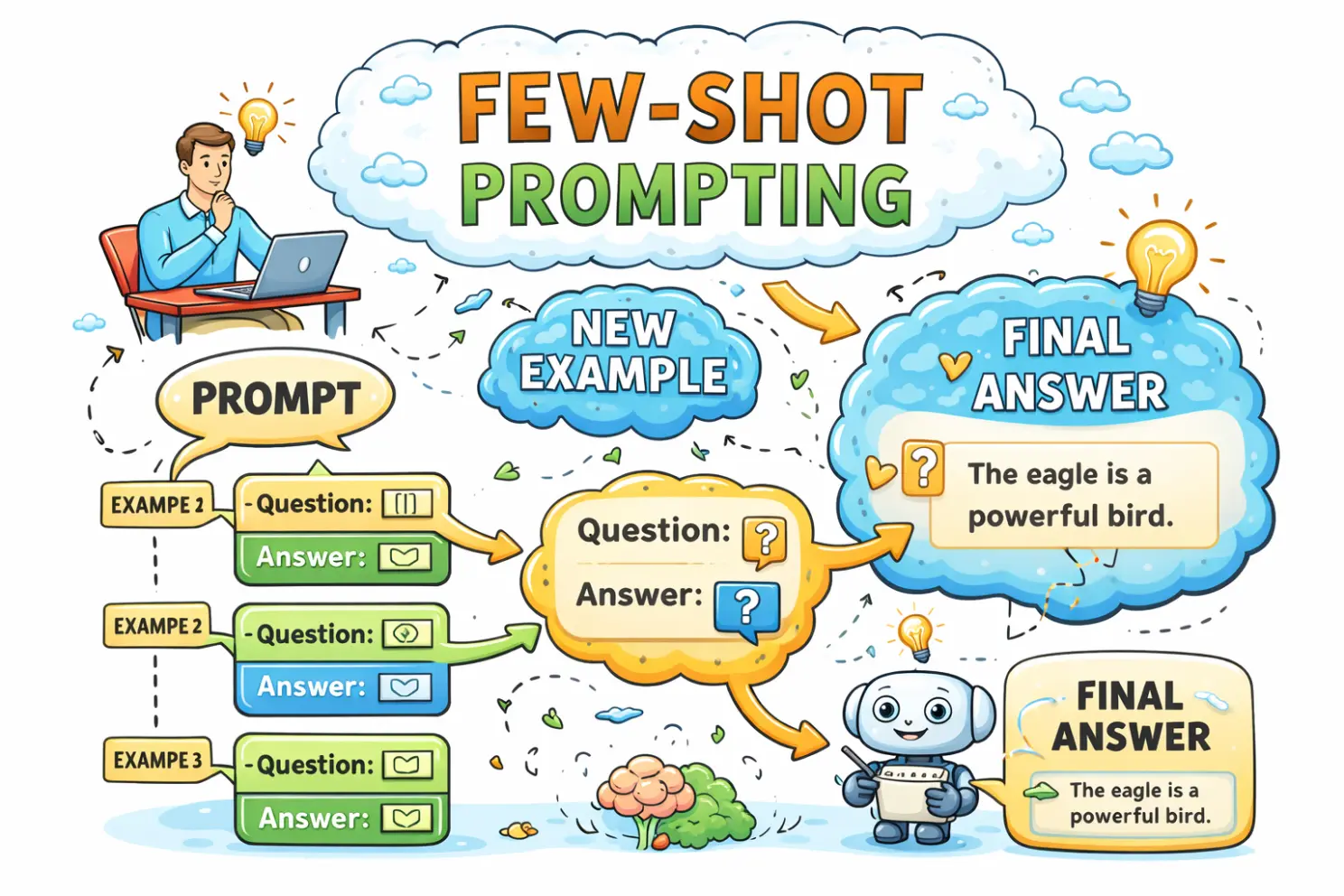

Including examples of the desired output is known as few-shot prompting, which helps guide the model toward the correct format, tone, and structure. By showing 3–5 well-designed examples, you give the model a clear pattern to follow. This technique can significantly improve performance because it reduces ambiguity about expectations and demonstrates exactly what a good response looks like.
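One common way to supply such examples is to place them in the message list as prior user and assistant turns. The sketch below assumes the widely used `role`/`content` chat message format; the helper name and the example texts are invented for illustration.

```python
from typing import Dict, List, Tuple


def build_few_shot_messages(system_prompt: str,
                            examples: List[Tuple[str, str]],
                            user_query: str) -> List[Dict[str, str]]:
    """Place worked examples between the system prompt and the real question."""
    messages = [{"role": "system", "content": system_prompt}]
    for question, answer in examples:
        messages.append({"role": "user", "content": question})
        messages.append({"role": "assistant", "content": answer})
    messages.append({"role": "user", "content": user_query})
    return messages


# Hypothetical examples showing the desired tone and structure.
EXAMPLES = [
    ("What services do you offer?",
     "We offer three main services:\n- Service A\n- Service B\n- Service C"),
    ("Do you have portfolio examples?",
     "Yes. Here are two recent projects:\n- Project X\n- Project Y"),
    ("Can I book a consultation?",
     "Certainly. Please share your name, email, and preferred date."),
]

messages = build_few_shot_messages(
    "You are a helpful assistant. Answer briefly and use bullet points for lists.",
    EXAMPLES,
    "Which automation tools do you build?",
)
```

The resulting list can be passed to any chat model that accepts this message shape; the model sees three demonstrations of the expected format before it sees the real question.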

After the model generates a response, it’s important to review it against predefined criteria. This evaluation step ensures the output meets the original requirements. For example: does it match the intended tone? Is the formatting correct? Does it fully address the task? Are all constraints respected? Evaluating responses helps maintain consistency and quality, particularly in production systems.

Prompt engineering is rarely perfect on the first attempt. It’s an ongoing process of refinement. By testing, analysing results, and making small adjustments, you can steadily improve performance. Even minor changes in wording, structure, or examples can lead to noticeable improvements in output quality. Over time, this iterative approach produces more reliable and effective prompts.

For the LangQuang chatbot, several prompting techniques were used to improve the accuracy, relevance, and quality of the responses generated by the language model. These techniques help guide the model to better understand user queries and provide more structured and useful outputs. The main prompting techniques applied are outlined below:

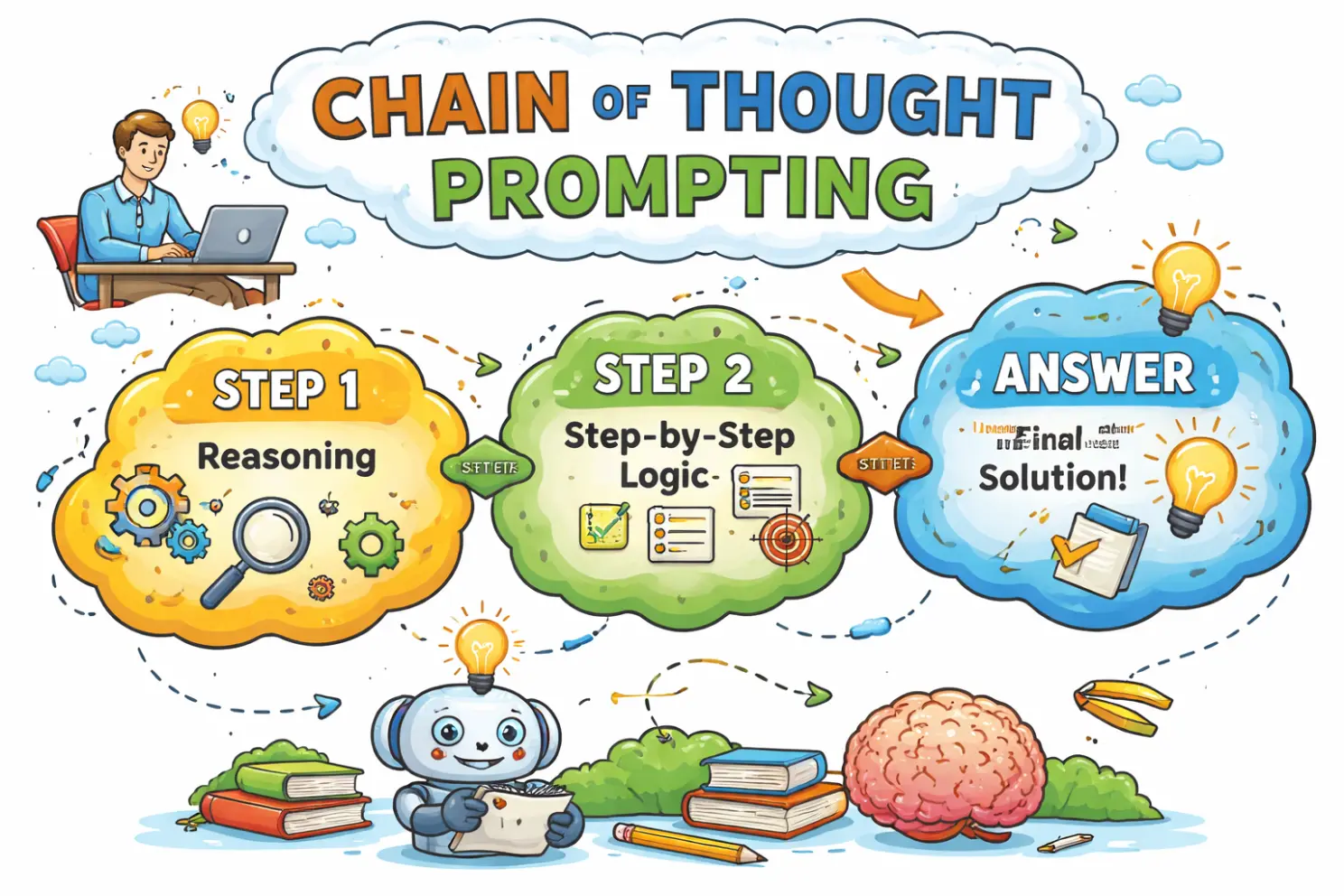

Chain-of-Thought prompting was used to guide the model to reason step by step before producing a final response. This technique encourages the model to “think before it acts,” which helps reduce mistakes and improves logical consistency in responses. Additionally, reasoning frameworks such as ReAct (Reason + Act) were employed to allow the model to analyse a problem first and then decide on the appropriate action, such as selecting a tool or generating a response.

Few-shot prompting was implemented by providing carefully designed examples within the prompt. These examples demonstrate the expected format and style of responses, enabling the model to better understand the task. In the LangQuang chatbot, tailored examples were mainly included for portfolio and service-related content to improve relevance, consistency, and accuracy of the generated outputs.

To manage token limits and reduce computational costs, token optimisation strategies were applied. This included summarising long conversation histories and breaking large inputs into smaller chunks (chunking). These methods help maintain efficiency while ensuring the model retains the most important information needed to generate accurate responses.
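Both strategies can be sketched in a few lines. The version below counts words as a rough proxy for tokens, and the `summarise` argument is a placeholder for whatever produces the summary (in practice probably an LLM call); the function names and parameters are assumptions, not the project's code.

```python
from typing import Callable, List


def chunk_text(text: str, max_words: int, overlap: int = 0) -> List[str]:
    """Split text into chunks of at most max_words words, optionally overlapping."""
    if max_words <= 0 or not 0 <= overlap < max_words:
        raise ValueError("need max_words > 0 and 0 <= overlap < max_words")
    words = text.split()
    step = max_words - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks


def compact_history(messages: List[str], keep_last: int,
                    summarise: Callable[[List[str]], str]) -> List[str]:
    """Replace everything except the most recent messages with one summary line."""
    if keep_last <= 0:
        raise ValueError("keep_last must be positive")
    if len(messages) <= keep_last:
        return list(messages)
    older, recent = messages[:-keep_last], messages[-keep_last:]
    return ["Summary of earlier conversation: " + summarise(older)] + recent
```

A small overlap between chunks helps keep sentences that straddle a boundary retrievable, at the cost of storing a few words twice.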

Explicit instructions and constraints were used to control the tone and structure of the chatbot’s responses. By clearly specifying how the model should respond, the chatbot was guided to produce more consistent and organised outputs. For example, instructions such as “Summarise in three bullet points” or “Use a professional tone” were included. These prompts help ensure responses remain clear, well-structured, and aligned with the intended communication style. Establishing clear boundaries in the prompts also helps produce predictable and structured responses.

In the LangQuang chatbot, I used the system prompt as the central component that defines the agent’s identity, behaviour, and decision-making process. The system prompt acts as the “brain” of the chatbot, guiding how the model interprets user requests and generates responses.

First, I clearly defined the role of the agent within the system prompt. For example, the prompt may include instructions such as: “You are a helpful assistant for LangQuang.” This helps the model understand its purpose and ensures responses remain aligned with the chatbot’s objectives.

I also included clear instructions on how and when the chatbot should use available tools. Detailed tool descriptions, particularly through well-written docstrings, help the model understand the purpose of each tool. This enables the agent to decide when to retrieve information from the database, when to rely on its internal knowledge, and when to call external tools.
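To show why docstrings matter, the sketch below builds the tool list a model might be shown directly from each function's signature and docstring, using only the standard `inspect` module. The two tool functions and `describe_tools` are hypothetical; agent frameworks typically do something similar internally when a function is registered as a tool.

```python
import inspect
from typing import Callable, List


def get_service_details(service_name: str) -> str:
    """Look up a service by name and return its description and official URL.

    Use this when the user asks what a specific service includes.
    """
    return f"(details for {service_name})"  # placeholder body


def book_consultation(name: str, email: str, date: str) -> str:
    """Submit a consultation booking after the user has confirmed the summary."""
    return "(booking submitted)"  # placeholder body


def describe_tools(tools: List[Callable]) -> str:
    """Render one line per tool: name, signature, and first docstring line."""
    lines = []
    for tool in tools:
        signature = inspect.signature(tool)
        doc = inspect.getdoc(tool) or "No description provided."
        lines.append(f"- {tool.__name__}{signature}: {doc.splitlines()[0]}")
    return "\n".join(lines)


tool_section = describe_tools([get_service_details, book_consultation])
```

A vague docstring such as "Gets data" would give the model almost nothing to decide with, whereas the first line above states both what the tool returns and when it applies.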

To improve decision-making, I applied structured reasoning approaches such as the ReAct (Reason + Act) framework. This framework encourages the model to first think through the problem, then select the appropriate tool, and finally execute the required action to generate an accurate response.
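The think, select, execute cycle can be sketched as a small loop. Here the language model is replaced by `scripted_model`, a hard-coded stand-in that returns a thought, an action, and an argument; the tool, its URL, and all function names are made up for the example.

```python
from typing import Callable, Dict, List, Tuple

ModelStep = Tuple[str, str, str]  # (thought, action, argument)


def get_service_url(name: str) -> str:
    """Hypothetical tool: return a stored URL or 'not found'."""
    urls = {"Automation Tools": "https://example.com/automation"}
    return urls.get(name, "not found")


TOOLS: Dict[str, Callable[[str], str]] = {"get_service_url": get_service_url}


def scripted_model(question: str, observations: List[str]) -> ModelStep:
    """Stand-in for the LLM: first asks for a tool, then answers."""
    if not observations:
        return ("I need the verified URL before answering.",
                "get_service_url", "Automation Tools")
    return ("I have the URL, so I can answer.",
            "final_answer", f"See {observations[-1]}")


def react_loop(question: str,
               model: Callable[[str, List[str]], ModelStep],
               tools: Dict[str, Callable[[str], str]],
               max_turns: int = 5) -> str:
    observations: List[str] = []
    for _ in range(max_turns):
        thought, action, argument = model(question, observations)  # reason
        if action == "final_answer":
            return argument
        if action not in tools:
            observations.append(f"error: unknown tool {action}")
            continue
        observations.append(tools[action](argument))                # act, then observe
    return "Sorry, I could not complete that request."


answer = react_loop("Where can I read about automation tools?", scripted_model, TOOLS)
```

The `max_turns` cap is a deliberate design choice: if the model keeps requesting tools without converging, the loop ends with a fallback message rather than running indefinitely.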

I also incorporated few-shot examples within the prompt to enhance the chatbot’s performance. These examples demonstrate how the chatbot should respond to specific types of user queries. Well-designed sample conversations help the model learn the expected response style and structure.

In many cases, including just a few high-quality examples can significantly improve the model’s accuracy. For instance, using around three strong examples can increase response accuracy from below 20% to over 50%, as the model gains a clearer understanding of the expected behaviour.

The LangQuang chatbot follows a structured workflow when processing user requests.

The user requests a specific service.

The system analyses the request and identifies the user’s intent.

The agent selects and calls the appropriate service tool.

The required data is retrieved from the database.

The correct URL related to the service is fetched.

Finally, the chatbot returns a formatted HTML link along with the response to the user.

This workflow ensures the chatbot can efficiently process requests, retrieve relevant information, and deliver clear, useful responses to users.
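The steps above can be sketched end to end as follows. Intent detection is reduced to a keyword match, the in-memory `SERVICES` dictionary stands in for the database, and the URL is a placeholder; only the overall shape (intent, lookup, escaped HTML link) reflects the workflow described.

```python
import html
from typing import Dict, Optional, Tuple

# Stand-in for the database: lowercased key -> (display name, verified URL).
SERVICES: Dict[str, Tuple[str, str]] = {
    "booking & scheduling systems": (
        "Booking & Scheduling Systems",
        "https://example.com/services/booking",
    ),
}


def detect_intent(message: str) -> Optional[str]:
    """Very rough intent detection: find a known service name in the message."""
    text = message.lower()
    for key in SERVICES:
        if key in text:
            return key
    return None


def format_link(label: str, url: str) -> str:
    """Build an anchor tag, escaping both the URL and the visible label."""
    return f'<a href="{html.escape(url, quote=True)}">{html.escape(label)}</a>'


def handle_request(message: str) -> str:
    key = detect_intent(message)
    if key is None:
        return "Could you tell me which service you are interested in?"
    label, url = SERVICES[key]  # data and URL come from the store, not the model
    return f"Here is more information: {format_link(label, url)}"


reply = handle_request("Tell me about Booking & Scheduling Systems")
```

Escaping the label matters here because the service name contains an ampersand, which would otherwise produce invalid HTML.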

Using LangGraph makes the workflow easy to manage and extend. The system clearly separates responsibilities across components: FastAPI handles incoming requests and authentication, LangGraph manages orchestration and conditional workflows, tools manage external integrations and business logic, the database stores scraped and structured content, and the state layer tracks conversation context across sessions.

Because each component has a defined role, the architecture remains modular, maintainable, and production-ready. For example, if I need to introduce a human review step before booking confirmation, I can simply add a new node and conditional edge to the graph. Likewise, if a tool fails, the workflow can loop, retry, or route to an alternative path without breaking the system.
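The retry-then-reroute behaviour can be illustrated without any framework. In the sketch below, `call_with_retry` and its parameters are hypothetical names; in a graph, the retry would typically be a loop edge and the fallback an alternative node.

```python
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def call_with_retry(primary: Callable[[], T],
                    fallback: Callable[[Optional[Exception]], T],
                    max_attempts: int = 3) -> T:
    """Try the primary tool up to max_attempts times, then use the fallback."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    last_error: Optional[Exception] = None
    for _ in range(max_attempts):
        try:
            return primary()
        except Exception as exc:  # broad on purpose: any tool failure triggers a retry
            last_error = exc
    return fallback(last_error)


def make_flaky_tool(failures_before_success: int) -> Callable[[], str]:
    """Test helper: a tool that fails a fixed number of times, then succeeds."""
    remaining = {"count": failures_before_success}

    def tool() -> str:
        if remaining["count"] > 0:
            remaining["count"] -= 1
            raise ConnectionError("temporary failure")
        return "tool result"

    return tool
```

For example, `call_with_retry(make_flaky_tool(2), lambda err: "fallback")` succeeds on the third attempt, while a tool that fails three or more times is routed to the fallback with the last error attached.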

Key Features of the Agent

This agentic system is designed for reliability and production use, featuring:

Cyclic Reasoning: The ability to loop through steps, allowing the model to self-correct if a tool returns an error or if additional information is needed.

State Persistence: Conversation history and intermediate results are stored and reconstructed for each session, ensuring continuity across interactions.

Human-in-the-Loop: The architecture includes interrupt points for approval before sensitive actions.

Structured Output: Tools return formatted data, enabling clean downstream processing without the need for manual parsing.
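As one way to picture state persistence, the sketch below saves and reloads per-session state as JSON in SQLite. It is an assumption-laden illustration rather than the project's implementation: LangGraph has its own checkpointing mechanism, and the `SessionStore` class, table name, and state shape here are invented.

```python
import json
import sqlite3
from typing import Any, Dict


class SessionStore:
    """Store one JSON-encoded state blob per session id."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self.conn = conn
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS sessions ("
            "session_id TEXT PRIMARY KEY, state TEXT NOT NULL)"
        )

    def load(self, session_id: str) -> Dict[str, Any]:
        """Return the saved state, or a fresh empty state for a new session."""
        row = self.conn.execute(
            "SELECT state FROM sessions WHERE session_id = ?", (session_id,)
        ).fetchone()
        return json.loads(row[0]) if row else {"messages": [], "results": {}}

    def save(self, session_id: str, state: Dict[str, Any]) -> None:
        self.conn.execute(
            "INSERT OR REPLACE INTO sessions (session_id, state) VALUES (?, ?)",
            (session_id, json.dumps(state)),
        )
        self.conn.commit()


store = SessionStore(sqlite3.connect(":memory:"))

# First request in a session: load, update, save.
state = store.load("session-1")
state["messages"].append("user: What services do you offer?")
store.save("session-1", state)

# A later request reconstructs the same state from storage.
restored = store.load("session-1")
```

Keying the state by session id is what lets a stateless HTTP endpoint pick up a conversation where it left off.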

Several tools were integrated into the LangQuang chatbot to enable efficient information retrieval and support real business operations:

Knowledge Base Tool: This tool retrieves information from embedded company documents, allowing the chatbot to access internal knowledge and provide accurate, context-relevant responses.

DB Data Tool: This tool provides access to structured data scraped from the company website. It enables the chatbot to quickly retrieve organised information such as services, descriptions, and relevant links.

External APIs (Portfolio and Booking Tools): External APIs were integrated to support portfolio display and booking functionalities. These tools allow the chatbot to interact with external systems and assist users with portfolio enquiries or service bookings.

Custom Business Tools (Service Tools): Custom tools were developed to manage the service catalogue and portfolio-related functions. These tools help the chatbot retrieve service details and present them clearly to users.

Together, these tools transform the agent from a purely conversational model into an action-oriented system capable of operating within real business workflows.

Transitioning from linear chains to graph-based agent architectures represents a significant advancement in AI system design. By combining LangGraph’s structured workflow management, FastAPI’s backend capabilities, persistent state handling, and carefully engineered tool integration, I built a reliable, scalable, and production-ready AI assistant.

This architecture demonstrates how structured state management, strict tool governance, and precise prompt engineering can transform a language model into a controlled, decision-making agent capable of navigating complex business environments and executing meaningful real-world actions.

© 2026 Langquang. All rights reserved.